Small Language Models vs Large Language Models: What’s the Difference?

AI Generated. Credit: Google Gemini

The rapid rise of artificial intelligence has changed how we approach problem-solving in the digital age. In just a few short years, we have moved from simple predictive text to systems that can pass bar exams and assist with difficult medical diagnoses. As the initial wow factor settles, a more practical conversation has emerged in boardrooms across America, and the discussion around Small Language Models vs Large Language Models has become increasingly important. Business leaders are no longer just asking what AI can do; they are asking what it costs and how it fits into their specific infrastructure.

This shift in perspective is why businesses are evaluating model size with such scrutiny. While the early days of the AI boom were defined by a “bigger is better” mentality, 2026 has ushered in a growing need for cost-efficient AI solutions that don’t compromise on security or speed.

Navigating the choice of Small Language Models vs Large Language Models is no longer a niche technical debate: it is a cornerstone of modern corporate strategy.

What Are Language Models?

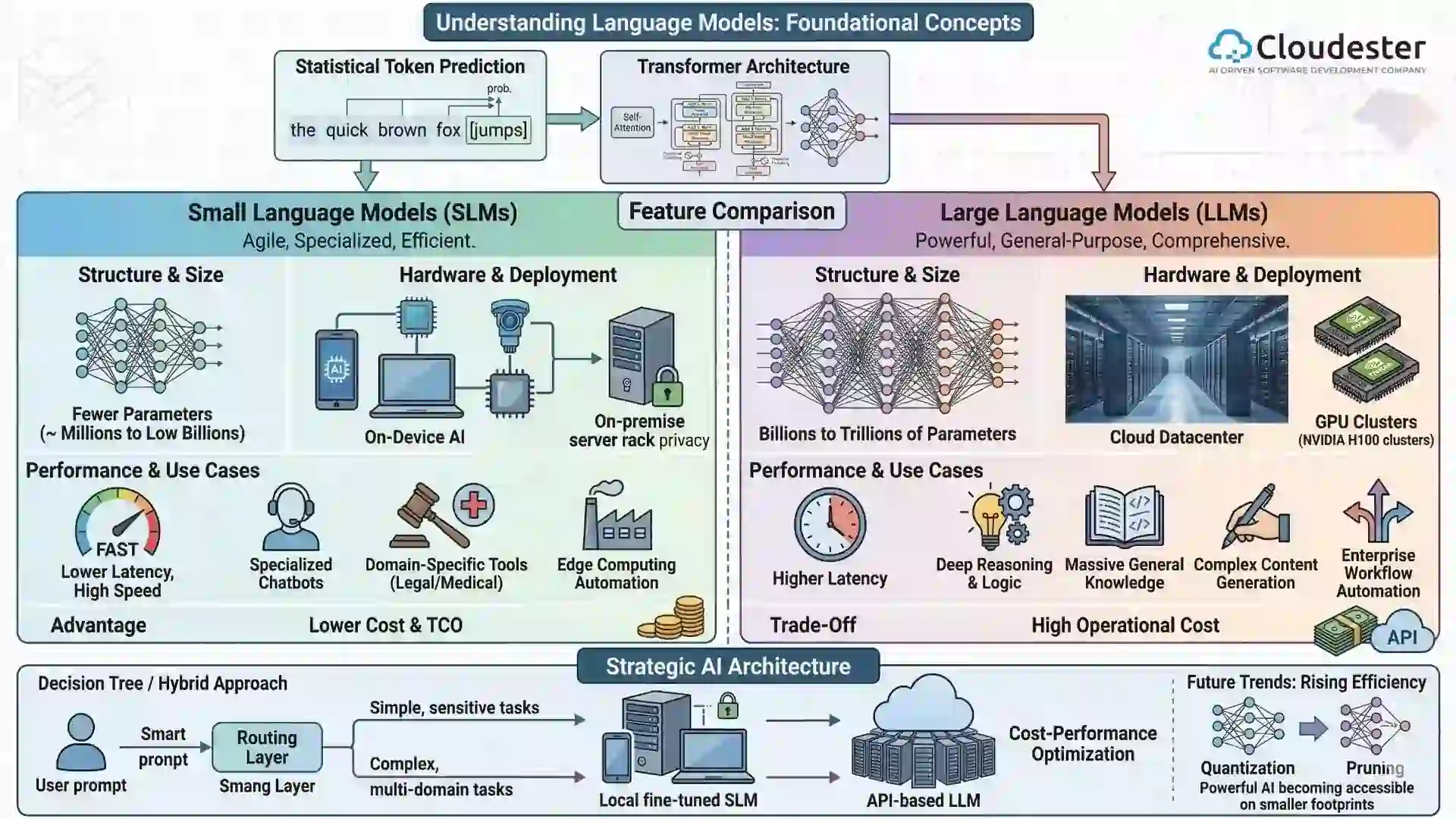

To understand the difference in scale, we first have to look at what these models actually are. At their simplest, language models are mathematical engines trained to understand and generate human language. They function by predicting the most statistically likely next token (a word or part of a word) in a sequence.
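To make next-token prediction concrete, here is a minimal sketch using a toy bigram counter. It is an illustration only: real language models learn these probabilities with neural networks over far more context, and the corpus and the function names `train_bigram` and `predict_next` are made up for this example.

```python
from collections import Counter, defaultdict


def train_bigram(tokens):
    """Count how often each token is followed by each other token."""
    counts = defaultdict(Counter)
    for current, following in zip(tokens, tokens[1:]):
        counts[current][following] += 1
    return counts


def predict_next(counts, token):
    """Return the most frequent follower of `token` and its estimated probability."""
    followers = counts.get(token)
    if not followers:
        # The token was never seen with a follower, so there is nothing to predict.
        return None, 0.0
    best, freq = followers.most_common(1)[0]
    return best, freq / sum(followers.values())


# Toy corpus (an assumption for illustration, not real training data).
corpus = "the cat sat on the mat and the cat slept".split()
model = train_bigram(corpus)
next_token, probability = predict_next(model, "the")
print(next_token, round(probability, 2))
```

In this corpus, "the" is followed by "cat" twice and "mat" once, so the sketch picks "cat". A larger model does the same kind of ranking, just with far richer statistics.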

Modern models are built on the Transformer architecture, which revolutionized the field by using self-attention to process all the tokens in a sequence in parallel rather than one word at a time. This evolution from early NLP models to the current generation of LLMs was fueled by a massive increase in training data and an explosion in parameters.

Parameters are essentially the internal variables that the model adjusts during its training phase to learn patterns, nuances, and facts.

What Are Small Language Models (SLMs)?

Small Language Models are the agile, high-performance athletes of the AI world. While they may lack the sheer encyclopedic volume of their larger counterparts, they are engineered for precision and speed.

Definition

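SLMs are models with significantly fewer parameters than their larger counterparts. There is no formal cutoff, but the label typically covers models ranging from a few million to the low billions of parameters (usually under 15 billion).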

Key Characteristics

- Lightweight Architecture: These models require far less memory and storage.

- Faster Inference Time: Because there are fewer mathematical calculations per request, responses arrive noticeably faster on the same hardware.

- Lower Computational Cost: You don’t need a room full of specialized chips to run them.

- Easier Fine-Tuning: It is much faster and cheaper to teach an SLM your specific company brand voice or technical jargon.
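A rough way to see why parameter count drives memory needs is to multiply the number of parameters by the bytes used to store each one. The sketch below does just that. It covers model weights only (activations and caches add more at run time), and the 3-billion and 70-billion parameter sizes are hypothetical examples, not specific products.

```python
# Bytes needed to store one parameter at common numeric precisions.
BYTES_PER_PARAM = {"fp32": 4, "fp16": 2, "int8": 1, "int4": 0.5}


def weight_memory_gb(num_params, precision="fp16"):
    """Approximate memory for the weights alone, in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[precision] / 1e9


# Hypothetical model sizes chosen only to show the scale difference.
for name, params in [("3B-parameter model", 3e9), ("70B-parameter model", 70e9)]:
    print(name, weight_memory_gb(params, "fp16"), "GB at fp16")
```

Under these assumptions the smaller model’s weights come to about 6 GB at 16-bit precision, while the larger one needs about 140 GB, which is more than any single consumer device offers.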

Common Use Cases

- Chatbots: Handling standard customer service inquiries at high speed.

- On-device AI: Powering features on smartphones or laptops without needing a cloud connection.

- Domain-specific Assistants: Serving as a specialized tool for a legal firm or a medical clinic.

- Edge Computing: Running AI on local hardware in manufacturing plants or retail stores.

Advantages

The primary draw of an SLM is that it is cost-efficient and faster to deploy. SLMs are also well suited to strict data privacy requirements: because they can be hosted entirely on a local server, sensitive information never has to leave your own infrastructure.

Limitations

The trade-off is more limited reasoning capability. An SLM might struggle with highly abstract logic or with tasks that require deep context across unrelated subjects.

What Are Large Language Models (LLMs)?

If an SLM is a specialist, a Large Language Model is a polymath. These are the giants like GPT-4 or Gemini that captured the world’s imagination.

Definition

These are models with tens of billions to, reportedly, trillions of parameters, trained on very large and diverse datasets.

Key Characteristics

- Massive Training Datasets: They are trained on a broad cross-section of text, from Shakespeare to Python documentation.

- Advanced Reasoning: They can connect disparate ideas and perform complex, multi-step logical tasks.

- Broad General Knowledge: They can pivot from writing a marketing email to explaining quantum physics in seconds.

Common Use Cases

- Advanced Conversational AI: Handling nuanced, unpredictable human interactions.

- Content Generation: Drafting long-form reports or creative storytelling.

- Research Assistance: Synthesizing vast amounts of unstructured data into actionable insights.

- Enterprise Automation: Orchestrating complex workflows across multiple departments and software systems.

Advantages

The strength of an LLM lies in its high accuracy and strong contextual understanding. They are multi-task capable by nature, meaning one model can serve ten different departments effectively.

Limitations

The downside is that they are incredibly expensive to train and run. They often suffer from high latency and require massive infrastructure, usually forcing companies to rely on third-party cloud providers.

Small Language Models vs Large Language Models: Core Differences

| Feature | Small Language Models | Large Language Models |
|---|---|---|
| Parameters | Millions to a few billion | Billions to trillions |
| Cost | Low | High |
| Speed | Fast | Slower |
| Accuracy | Moderate (High if specialized) | High (General purpose) |
| Infrastructure | Minimal (Standard servers) | Heavy (multi-GPU clusters, e.g. NVIDIA H100) |
| Best For | Specific tasks | Complex reasoning |

Performance Comparison: Accuracy vs Efficiency

There is a fundamental trade-off between size and efficiency. While a massive model is generally more capable, there are many scenarios in which smaller models outperform larger ones.

For instance, a fine-tuned SLM trained exclusively on a company’s proprietary data will often provide more accurate and relevant answers than a general-purpose LLM that has only a surface-level understanding of that industry.

Cost Comparison: Training and Deployment

The Total Cost of Ownership (TCO) is where the two diverge most sharply.

- GPU Cost: LLMs require clusters of high-end GPUs that cost tens of thousands of dollars each.

- Cloud Infrastructure: Running a massive model via API can lead to unpredictable monthly bills.

- ROI Considerations: For many businesses, the marginal increase in intelligence provided by an LLM doesn’t justify a cost that can be many times higher (10x in some cases).
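The arithmetic behind these cost points can be sketched in a few lines. Every number below is a placeholder assumption chosen for illustration (request volume, tokens per request, and both per-token prices), not a quote from any provider, so substitute your own figures before drawing conclusions.

```python
def monthly_cost(requests_per_month, tokens_per_request, price_per_million_tokens):
    """Estimate monthly spend in dollars for a usage-priced model."""
    total_tokens = requests_per_month * tokens_per_request
    return total_tokens / 1_000_000 * price_per_million_tokens


# Placeholder workload and prices (assumptions, not real pricing).
REQUESTS = 500_000
TOKENS_PER_REQUEST = 800
SMALL_PRICE = 0.50   # dollars per million tokens, hypothetical
LARGE_PRICE = 5.00   # dollars per million tokens, hypothetical

small = monthly_cost(REQUESTS, TOKENS_PER_REQUEST, SMALL_PRICE)
large = monthly_cost(REQUESTS, TOKENS_PER_REQUEST, LARGE_PRICE)
print(f"small: ${small:,.2f}  large: ${large:,.2f}  ratio: {large / small:.1f}x")
```

With these placeholder inputs, the same workload costs $200 a month on the smaller model and $2,000 on the larger one. The useful part is the formula, which lets you test how the gap changes as volume grows.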

We specialize in helping organizations navigate these financial waters, ensuring that the AI architecture chosen actually reflects the company’s bottom-line goals rather than just following the latest trend.

Use Case-Based Comparison

- For Startups: SLMs are often ideal because they allow for rapid prototyping and low overhead.

- For Enterprises: LLMs are beneficial when the goal is a centralized brain that can assist with high-level strategy and research.

- For Edge & Mobile AI: SLMs dominate this space due to hardware limitations.

- For Research & Deep Reasoning: LLMs perform better when the task requires connecting dots that are far apart.

When Should You Choose a Small Language Model?

You should lean toward an SLM if you have strict budget constraints or strict data privacy requirements. They are the go-to choice for domain-specific tasks where low latency is non-negotiable, such as a real-time voice assistant in a retail environment.

When Should You Choose a Large Language Model?

An LLM is the right choice when you need broad knowledge and the ability to handle complex reasoning. If your project involves multi-domain tasks or requires the highest possible accuracy across a wide range of topics, the investment in a large model is justified.

Hybrid Approach: Combining Small and Large Models

The most sophisticated AI strategies in 2026 don’t actually choose just one. Instead, they use a hybrid approach.

This might involve Model Distillation, where a large model is used to teach a smaller one, or a system where a fine-tuned domain SLM handles the bulk of requests (say, 90%) while an API-based LLM is called only for the most difficult remainder. This cost-performance optimization strategy provides the best of both worlds.
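The routing idea can be sketched as a simple confidence check. This is a minimal illustration, not a production design: the function `route`, the 0.8 threshold, and both stand-in models are hypothetical, and how you obtain a trustworthy confidence score from a real small model is left as an open design decision.

```python
def route(prompt, small_model, large_model, threshold=0.8):
    """Try the small model first; fall back to the large one when confidence is low.

    `small_model` must return (answer, confidence in [0, 1]);
    `large_model` must return an answer string.
    """
    draft, confidence = small_model(prompt)
    if confidence >= threshold:
        return draft, "small"
    return large_model(prompt), "large"


# Stand-in models for demonstration only (no real model is called).
def fake_small_model(prompt):
    known_answers = {"store hours": "We are open 9 to 5."}
    if prompt in known_answers:
        return known_answers[prompt], 0.95
    return "", 0.10


def fake_large_model(prompt):
    return f"[large model answer to: {prompt}]"


print(route("store hours", fake_small_model, fake_large_model))
print(route("compare two contract clauses", fake_small_model, fake_large_model))
```

Routine questions stay on the cheap local model, and only the prompts it cannot answer confidently incur the cost and latency of the larger one.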

Future Trends in Language Models

The future is leaning heavily toward the rise of efficient AI. Techniques like Quantization (storing weights at lower numeric precision) and Pruning (removing weights that contribute little) are allowing us to shrink massive models with only a small loss in accuracy.
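As a rough illustration of quantization, the sketch below maps floating-point weights to 8-bit integers using a single shared scale, then converts them back to measure what was lost. It shows one simple scheme (symmetric, per-tensor) under toy assumptions. Real toolkits use more refined variants, and the example weights are invented.

```python
def quantize_int8(weights):
    """Symmetric per-tensor quantization: map floats to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float weights from the integers and the shared scale."""
    return [q * scale for q in quantized]


# Invented example weights, for illustration only.
weights = [0.42, -1.27, 0.003, 0.9]
quantized, scale = quantize_int8(weights)
restored = dequantize(quantized, scale)
max_error = max(abs(original - approx) for original, approx in zip(weights, restored))
print(quantized, max_error)
```

Each weight now fits in one byte instead of four, and the rounding error per weight stays within half of the scale factor. That bounded error is why accuracy usually degrades only slightly.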

We are seeing a boom in domain-specific AI models that favor depth over breadth. This shift represents a genuine democratization of AI, where smaller players can compete with tech titans.

Conclusion

When it comes to the debate of Small Language Models vs Large Language Models, there is no one-size-fits-all solution. The right decision depends entirely on your specific use case, your available budget, and the scale at which you plan to operate.

The team at Cloudester Software understands that in the modern economy, smart AI architecture beats size alone. By choosing a model that fits your needs precisely, you ensure that your AI isn’t just a flashy experiment, but a sustainable engine for growth.